API Monitoring test steps

API tests consist of a series of steps, most often HTTP requests. In addition to requests, you can add other types of steps to your tests, such as pauses and conditions.

The Editor is where you'll define the steps (HTTP requests, pauses, etc.) and execution order that make up a test. For each request in a test, you can specify the HTTP request data, assertions, variables and scripts by clicking on a request.

- HTTP request step

- Pause step

- Incoming request step

- Curl step

- Condition step

- Conditional loop step

- Subtest step

- Browser test (Ghost Inspector) step

- Working with test steps

HTTP request step

Click Request to add an empty request template to the end of your test. Replace the placeholder data with the method, URL, headers and parameters for the API your test needs to call.

Request steps can be imported from other tools like Swagger, AWS API Gateway and Postman.

Request lifecycle

When a request step is executed, each of the associated assertions, variables and scripts is processed. The execution order is as follows:

- Pre-request Scripts are executed. The variable context from initial variables, scripts, and previous steps is available via variables.get().

- The HTTP request is executed and a response is returned.

- Variables defined in the editor are processed on the response.

- Post-response Scripts are processed. Initial and request-specific variable values extracted from previous steps are available for use.

- Assertions defined in the test editor are processed on the response. If the response object was modified by a Post-response Script, the modified data is not available to be evaluated by an Assertion.
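The ordering above can be modeled with a short Python sketch. This is not the product's implementation: run_request_step, fake_send, and tamper are hypothetical names, and handing Post-response Scripts a copy of the response is only an assumed way to reproduce the documented behavior that script changes to the response are not seen by assertions.

```python
import copy
from typing import Any, Callable, Dict, List

Variables = Dict[str, Any]
Response = Dict[str, Any]


def run_request_step(
    variables: Variables,
    pre_scripts: List[Callable[[Variables], None]],
    send: Callable[[Variables], Response],
    extractors: Dict[str, Callable[[Response], Any]],
    post_scripts: List[Callable[[Response, Variables], None]],
    assertions: List[Callable[[Response], bool]],
) -> List[bool]:
    # 1. Pre-request scripts see variables from earlier steps.
    for script in pre_scripts:
        script(variables)
    # 2. The HTTP request runs and yields a response.
    response = send(variables)
    # 3. Editor-defined variables are extracted from the response.
    for name, extract in extractors.items():
        variables[name] = extract(response)
    # 4. Post-response scripts get a copy (assumption), so their edits
    #    to the response never reach the assertions.
    for script in post_scripts:
        script(copy.deepcopy(response), variables)
    # 5. Assertions are evaluated against the original response.
    return [check(response) for check in assertions]


def fake_send(variables: Variables) -> Response:
    # Stand-in for a real HTTP call; returns a canned response.
    return {"status": 200, "body": {"token": variables["prefix"] + "-123"}}


def tamper(response: Response, variables: Variables) -> None:
    response["status"] = 500  # modifies only the script's copy
    variables["seen_token"] = variables["token"]  # set in phase 3


state: Variables = {}
results = run_request_step(
    variables=state,
    pre_scripts=[lambda v: v.update(prefix="abc")],
    send=fake_send,
    extractors={"token": lambda r: r["body"]["token"]},
    post_scripts=[tamper],
    assertions=[lambda r: r["status"] == 200],
)
print(results, state)
```

In this model the assertion still passes even though the script set the status to 500, because the script only ever touched its own copy.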

Pause step

Pauses are a type of test step that allow you to introduce short delays between steps in the test plan. You can add as many pauses as you need, but tests require at least one request to execute. If your test is set to use a schedule, take care that the total amount of execution time is less than your test's schedule interval.

Click Pause to add a step that pauses test run execution for a brief period of time. A pause can last from 1 to 180 seconds. Pauses are not guaranteed to be exact, but each pause is guaranteed to last at least the amount of time specified.
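As a rough planning aid, the schedule check described above can be sketched in Python. The function names and the request_time_s estimate are hypothetical. Because pauses last at least their configured duration, the computed total is a lower bound on the real run time.

```python
from typing import Iterable

MIN_PAUSE_S = 1    # shortest pause allowed, per the limits above
MAX_PAUSE_S = 180  # longest pause allowed, per the limits above


def minimum_run_time(pauses_s: Iterable[int], request_time_s: float) -> float:
    """Lower bound on run time: estimated request time plus all pauses."""
    total = float(request_time_s)
    for pause in pauses_s:
        if not MIN_PAUSE_S <= pause <= MAX_PAUSE_S:
            raise ValueError(
                f"pause must be {MIN_PAUSE_S}-{MAX_PAUSE_S} seconds, got {pause}"
            )
        total += pause
    return total


def fits_schedule(pauses_s: Iterable[int], request_time_s: float,
                  interval_s: int) -> bool:
    """True when the minimum run time is shorter than the schedule interval."""
    return minimum_run_time(pauses_s, request_time_s) < interval_s


# Two pauses plus about 5 seconds of requests on a 5-minute schedule.
print(fits_schedule([30, 180], 5.0, 300))   # 215 seconds is under 300
print(fits_schedule([180, 180], 5.0, 300))  # 365 seconds is over 300
```

Leave some headroom below the interval, since actual pauses and requests can run longer than estimated.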

Incoming request step

Incoming requests allow you to pause execution of a test run until a request is received at a unique URL. This is useful for testing webhooks or other asynchronous HTTP callback methods.

Important considerations for incoming steps

- The response to the incoming request will be a 200 OK with an empty response body.

- The max wait time for an incoming request is 10 minutes.

- If you make an incoming request (i.e., use a request's unique URL) before the test run reaches the incoming request step, we'll store the data for up to 30 seconds.

- Tests with incoming steps should only be executed from a single location, on a schedule that is longer than the expected response time window. Simultaneous test runs may cause unexpected results.
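The timing rules above can be summarized in a small Python sketch. It is only a model for reasoning about callback timing: incoming_step_outcome is a hypothetical helper, and how the exact boundaries (precisely 30 seconds early, precisely 10 minutes late) are treated is an assumption.

```python
EARLY_BUFFER_S = 30   # early requests are stored for up to 30 seconds
MAX_WAIT_S = 10 * 60  # the step waits at most 10 minutes


def incoming_step_outcome(arrival_offset_s: float) -> str:
    """Classify a callback by when it arrives relative to the step start.

    arrival_offset_s is negative when the callback arrives before the
    test run reaches the incoming request step.
    """
    if arrival_offset_s < -EARLY_BUFFER_S:
        return "dropped: arrived too early, stored data expired"
    if arrival_offset_s < 0:
        return "received: served from stored data"
    if arrival_offset_s <= MAX_WAIT_S:
        return "received: step was waiting"
    return "timed out: no request within the max wait"


for offset in (-45, -10, 120, 601):
    print(offset, "->", incoming_step_outcome(offset))
```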

Curl step (experimental)

Click From curl to create and add requests using a curl command. Supported options include -d, --data, --data-urlencode, -F, --form, -H, --request, -X, -b, --cookie, --user, -u. Unsupported options are ignored. File uploads are not supported.
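Before pasting a command, it can help to see which of its options fall outside the supported list. The following Python sketch is a rough pre-flight check, not the importer's actual parser: classify_curl_options is a hypothetical helper, and it does not untangle combined short flags such as -sS.

```python
import shlex
from typing import List, Tuple

# Options listed as supported by the curl step.
SUPPORTED = {
    "-d", "--data", "--data-urlencode", "-F", "--form", "-H",
    "--request", "-X", "-b", "--cookie", "--user", "-u",
}


def classify_curl_options(command: str) -> Tuple[List[str], List[str]]:
    """Split the options in a curl command into (supported, ignored)."""
    tokens = shlex.split(command)[1:]  # drop the leading "curl"
    supported: List[str] = []
    ignored: List[str] = []
    i = 0
    while i < len(tokens):
        token = tokens[i]
        i += 1
        if not token.startswith("-") or token == "-":
            continue  # the URL, or the value of an unsupported option
        if token.startswith("--"):
            flag, attached = token.partition("=")[0], "=" in token
        else:
            flag, attached = token[:2], len(token) > 2
        if flag in SUPPORTED:
            supported.append(flag)
            if not attached:
                i += 1  # every supported option takes a value; skip it
        else:
            ignored.append(flag)
    return supported, ignored


cmd = ('curl -X POST https://example.com/items '
       '-H "Accept: application/json" -d "-1" --compressed -L')
print(classify_curl_options(cmd))
```

For the sample command, -X, -H and -d are recognized, while --compressed and -L would be ignored by the step.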

Condition step

Condition steps are a way to conditionally run select test steps based on criteria you define. If the condition assertion evaluates to True, test steps embedded within a condition are executed. Otherwise, embedded test steps are skipped.

To create a new condition, click Condition, and drag any test steps you want conditionally executed into it. A condition assertion is an expression made up of a left operand, a comparison operator, and a right operand. When you define your condition assertion, you can hardcode the values or use any variables you've previously defined in your test or shared environment settings. To include the value of a variable in a condition assertion, enter the name of the variable surrounded by double braces, for example {{variable_name}}.

Assertion comparisons in conditions behave the same way they do in request steps, and you have access to all the same comparison operators you would in request steps. See the assertions comparison chart for more details about each type of comparison operator.
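To make the left operand, operator, right operand structure concrete, here is a minimal Python sketch of how a condition assertion could be evaluated. The render and condition_passes helpers are hypothetical, only a few operators are modeled, and rendering an undefined variable as an empty string is an assumption rather than documented behavior.

```python
import re
from typing import Any, Callable, Dict

_PLACEHOLDER = re.compile(r"\{\{\s*(\w+)\s*\}\}")


def render(template: str, variables: Dict[str, Any]) -> str:
    """Replace each {{name}} with the variable's value as a string."""
    return _PLACEHOLDER.sub(
        lambda match: str(variables.get(match.group(1), "")), template
    )


# A small subset of comparison operators, for illustration only.
OPERATORS: Dict[str, Callable[[str, str], bool]] = {
    "equals": lambda left, right: left == right,
    "does not equal": lambda left, right: left != right,
    "contains": lambda left, right: right in left,
    "equals (number)": lambda left, right: float(left) == float(right),
    "less than": lambda left, right: float(left) < float(right),
    "greater than": lambda left, right: float(left) > float(right),
}


def condition_passes(left: str, operator: str, right: str,
                     variables: Dict[str, Any]) -> bool:
    """Render both operands, then apply the comparison operator."""
    compare = OPERATORS[operator]
    return compare(render(left, variables), render(right, variables))


variables = {"status_code": 200, "env": "staging"}
print(condition_passes("{{status_code}}", "equals (number)", "200", variables))
print(condition_passes("{{env}}", "equals", "production", variables))
```

With these variables the first condition passes, so its embedded steps would run, and the second fails, so its embedded steps would be skipped.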

Conditional Loop step

Conditional loops (not to be confused with condition steps) are useful when you want to repeatedly make an API call to the server until you get a favorable response. For example, if you want to run calls until a certain status code is reached, or if you only want to send calls to a test suite with multiple iterations.

The following limitations apply to the conditional loop step:

- Timeout of 11 minutes: The loop will stop if it runs longer than 11 minutes.

- Maximum of 100 iterations: The loop will be halted if it exceeds 100 iterations.

Using conditional loops

To use conditional loops:

- Under Add Step, click Conditional Loop.

- For each conditional loop (the While box), define the API Monitoring variable you want to evaluate as the left operand, and a different variable or hardcoded value (when applicable) as the right operand. Enter the name of the variable surrounded by double braces, e.g. {{variable_name}}. Comparison operators include:

  - equals/does not equal

  - is empty/is not empty

  - contains/does not contain

  - is a number

  - equals (number)

  - less than/greater than

  - less than/greater than or equal to

  - has key

  - has value

  - is null

- Optional: Add substeps such as Pause, Incoming Request or Subtests into the conditional loop by clicking on the step and dragging it into the conditional loop box. You can also change the execution order by dragging and reordering the substeps.

After running the test, you can find details under each conditional loop, showing the test result and reason for each assertion's pass or failure. When using conditional loops, both test_result_uuid and template_uuid will remain the same for all loop iterations.

Example

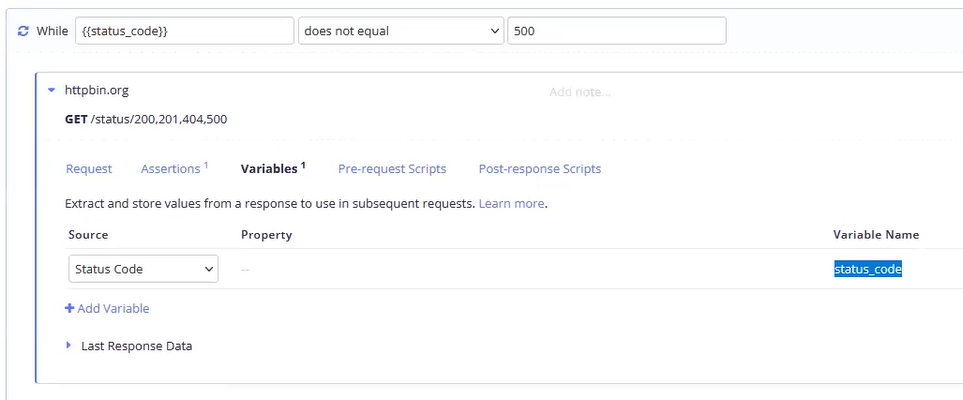

Let's say you want to set a conditional loop to run the test until you get a status code that equals 500. The first thing to do is to set up a variable that extracts a value from the response, in this case, its status code. Go to Request Step > Variables to define a status_code variable.

In the While statement, enter the status_code variable as {{status_code}} in the left operand and input 500 in the right operand. Set the comparison operator to does not equal, so that the conditional loop keeps running until the status code equals 500 or one of the loop limits (100 iterations or 11 minutes) is reached.
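The behavior of this example, including the two loop limits, can be sketched in Python. This is a model, not the product's implementation: run_conditional_loop is a hypothetical name, the canned status codes stand in for real API calls, and checking the condition before the first iteration (with the variable still undefined) is an assumption.

```python
import time
from typing import Callable, Tuple

MAX_ITERATIONS = 100  # documented iteration limit
TIMEOUT_S = 11 * 60   # documented 11-minute timeout


def run_conditional_loop(
    condition: Callable[[], bool],
    body: Callable[[], None],
    clock: Callable[[], float] = time.monotonic,
) -> Tuple[int, str]:
    """Run body while condition holds; return (iterations, stop reason)."""
    start = clock()
    iterations = 0
    while condition():
        if iterations >= MAX_ITERATIONS:
            return iterations, "iteration limit"
        if clock() - start > TIMEOUT_S:
            return iterations, "timeout"
        body()
        iterations += 1
    return iterations, "condition false"


# Canned status codes standing in for successive API responses.
responses = iter([200, 200, 503, 500])
state = {"status_code": None}


def call_api() -> None:
    state["status_code"] = next(responses)


# While {{status_code}} does not equal 500, keep calling the API.
outcome = run_conditional_loop(lambda: state["status_code"] != 500, call_api)
print(outcome)  # (4, 'condition false')
```

If the API never returned 500, the same loop would stop with the "iteration limit" reason after 100 iterations instead of running forever.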

Skipping loops

You can skip conditional loops by using the dot button on the top-right of a conditional loop box.

To unskip and put the loop back in the test, click the dot again.

Subtest step

Subtest steps can run other BlazeMeter API Monitoring tests as part of a test run. This is useful for reusing tests that perform common functionality like generating a new access token, setup/teardown or creating suites or groups of tests.

Environments

A subtest step can use environment settings from the parent test, the subtest, or any shared environment in the parent test's bucket.

Locations and agents

Subtest steps always use the location based on the location settings in the selected environment.

Assertions, variables & scripts

When a subtest completes, a JSON object representing the result is made available to run Assertions, Variables and Scripts against. You can validate that the resulting subtest run was correct and extract data from the variables object (the end result of the subtest's variable state) just like any other JSON payload. The JSON object you get back is the same as a webhook notification payload.

Passing parameters to subtests

By default, all of the selected environment's initial variables are passed to the subtest. To pass additional data, add 'Parameters' in the subtest step editor. These values will be passed to the subtest's initial variables, overriding any initial variable values with the same name.

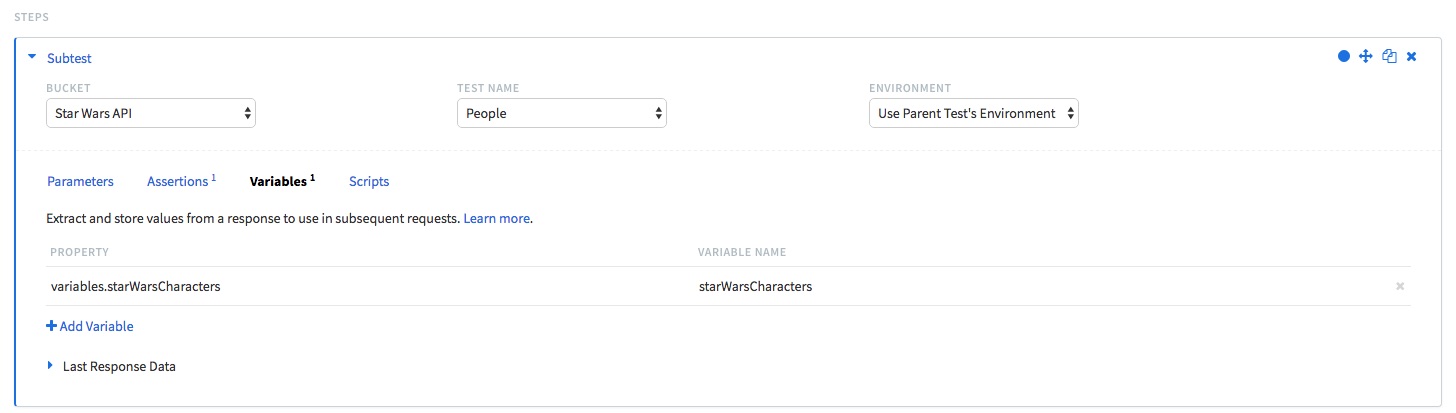
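The override rule for parameters behaves like a dictionary merge in which the parameters win. A minimal Python sketch, with hypothetical variable names and values:

```python
from typing import Any, Dict


def subtest_initial_variables(
    environment_vars: Dict[str, Any], parameters: Dict[str, Any]
) -> Dict[str, Any]:
    """Start from the environment's initial variables, then apply parameters."""
    merged = dict(environment_vars)  # copy so the environment is untouched
    merged.update(parameters)        # parameters override same-named values
    return merged


environment_vars = {"base_url": "https://api.example.com", "user": "default"}
parameters = {"user": "qa-bot", "order_id": "42"}
merged = subtest_initial_variables(environment_vars, parameters)
print(merged)
```

Here the subtest starts with user set to qa-bot, keeps base_url from the environment, and gains order_id from the parameters.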

Getting variables from subtests

You can use the "Variables" tab in a subtest step to extract variables that were created inside that subtest. Under "Property", you can access them by using variables.variable_name_in_subtest.
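A property path such as variables.variable_name_in_subtest is a dotted lookup into the subtest's JSON result. The Python sketch below shows the idea on a made-up payload: only the variables object is taken from the description above, while the other field, the access_token name, and the get_property helper are illustrative assumptions.

```python
import json
from functools import reduce
from typing import Any

# Illustrative subtest result; real payloads contain more fields.
raw_result = '{"result": "pass", "variables": {"access_token": "abc123"}}'


def get_property(payload: Any, path: str) -> Any:
    """Follow a dotted path such as 'variables.access_token'."""
    return reduce(lambda node, key: node[key], path.split("."), payload)


payload = json.loads(raw_result)
token = get_property(payload, "variables.access_token")
print(token)  # abc123
```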

You can nest loops by using Subtests, allowing a test to repeat specific steps within a larger workflow. This provides greater flexibility in test execution and data validation.

Some things to consider when using nested loops in Subtests:

- Be mindful when configuring environment variables in Subtests. Variables passed to a Subtest may be overridden by test or global environment settings.

- The 11-minute timeout applied to a parent loop still applies when there are nested loops within a Subtest. This prevents infinite loops.

Browser test (Ghost Inspector) step

When your team is connected with a Ghost Inspector account, a new step type is available to add to your tests: UI test. This is useful if your API requires a sign-in step before requests can be issued. Learn more in the Ghost Inspector integration guide.

Working with test steps

Create a test from steps

You can now create a new test directly from selected test steps in the API Monitoring editor. This is especially useful for splitting large, imported collections into smaller, modular tests.

To create the new test:

- Open a test in the API Monitoring editor.

- Select one or more test steps from the list.

- In the Actions dropdown (above the step list), select Create a test from Steps.

- A pop-up window will appear with the following fields:

  - Test name: (Required) Enter a name for the new test.

  - Bucket: Select the bucket where the new test will be created.

  - Copy Test Settings (Test Environments): Enable this to copy local test environments from the original test. Note that Shared Environments are not copied.

  - Delete selected steps after the copy?: Enable this if you want the original test steps to be removed after duplication.

- Click OK to confirm or Cancel to abort.

Limitations

- If you select a different bucket, only the local environment settings will be copied (if enabled). Shared Environments cannot be copied across buckets.

- Test schedules are not duplicated during this action.

- This action is available under the “Duplicate Steps” entry in the Actions menu.

Changing execution order

To change the execution order of requests within a test, drag the Reorder icon for a given request and drop it where you want it to be executed. The new order will be used on subsequent test runs. If you are using variables in your request data, take care to make sure that the request that defines a given variable comes before the request(s) that use it.
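The ordering rule for variables can be checked mechanically. The Python sketch below uses a simplified, hypothetical representation of steps (a name, a url that may contain {{variable}} placeholders, and a list of variables the step defines) and reports every use of a variable that no earlier step has defined.

```python
import re
from typing import Any, Dict, Iterable, List, Tuple

_PLACEHOLDER = re.compile(r"\{\{\s*(\w+)\s*\}\}")


def undefined_variable_uses(
    steps: List[Dict[str, Any]], initial: Iterable[str] = ()
) -> List[Tuple[str, str]]:
    """Return (step name, variable) pairs used before they are defined."""
    defined = set(initial)
    problems: List[Tuple[str, str]] = []
    for step in steps:
        for name in _PLACEHOLDER.findall(step["url"]):
            if name not in defined:
                problems.append((step["name"], name))
        # Variables extracted by this step are available to later steps.
        defined.update(step.get("defines", []))
    return problems


create = {"name": "Create item", "url": "https://example.com/items",
          "defines": ["item_id"]}
fetch = {"name": "Get item", "url": "https://example.com/items/{{item_id}}"}

print(undefined_variable_uses([fetch, create]))  # [('Get item', 'item_id')]
print(undefined_variable_uses([create, fetch]))  # []
```

The sketch only inspects URLs. A fuller check would also scan headers, parameters, and bodies for placeholders.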

Test step actions

You can skip, unskip, duplicate, and delete requests using the icons on the top right of each request box.

To perform an action on multiple requests, select the checkbox next to each step and use the Actions drop-down to apply the action to all selected steps.

- Skip: To skip an existing request in a test, either click the Skip blue circle icon, or select the checkbox on the top right of the request, then select Actions > Skip Steps.

- Unskip: To unskip an existing request in a test, either click the Unskip white circle icon, or select the checkbox on the top right of the request, then select Actions > Unskip Steps.

- Duplicate: To create a copy of an existing request in a test, either select the Duplicate Request icon, or select the checkbox on the top right of the request, then select Actions > Duplicate Steps. The new request(s) will be added to the end of the test.

- Delete: To delete an existing request in a test, either click the Delete icon, or select the checkbox on the top right of the request, then select Actions > Delete Steps.